April 28, 2026

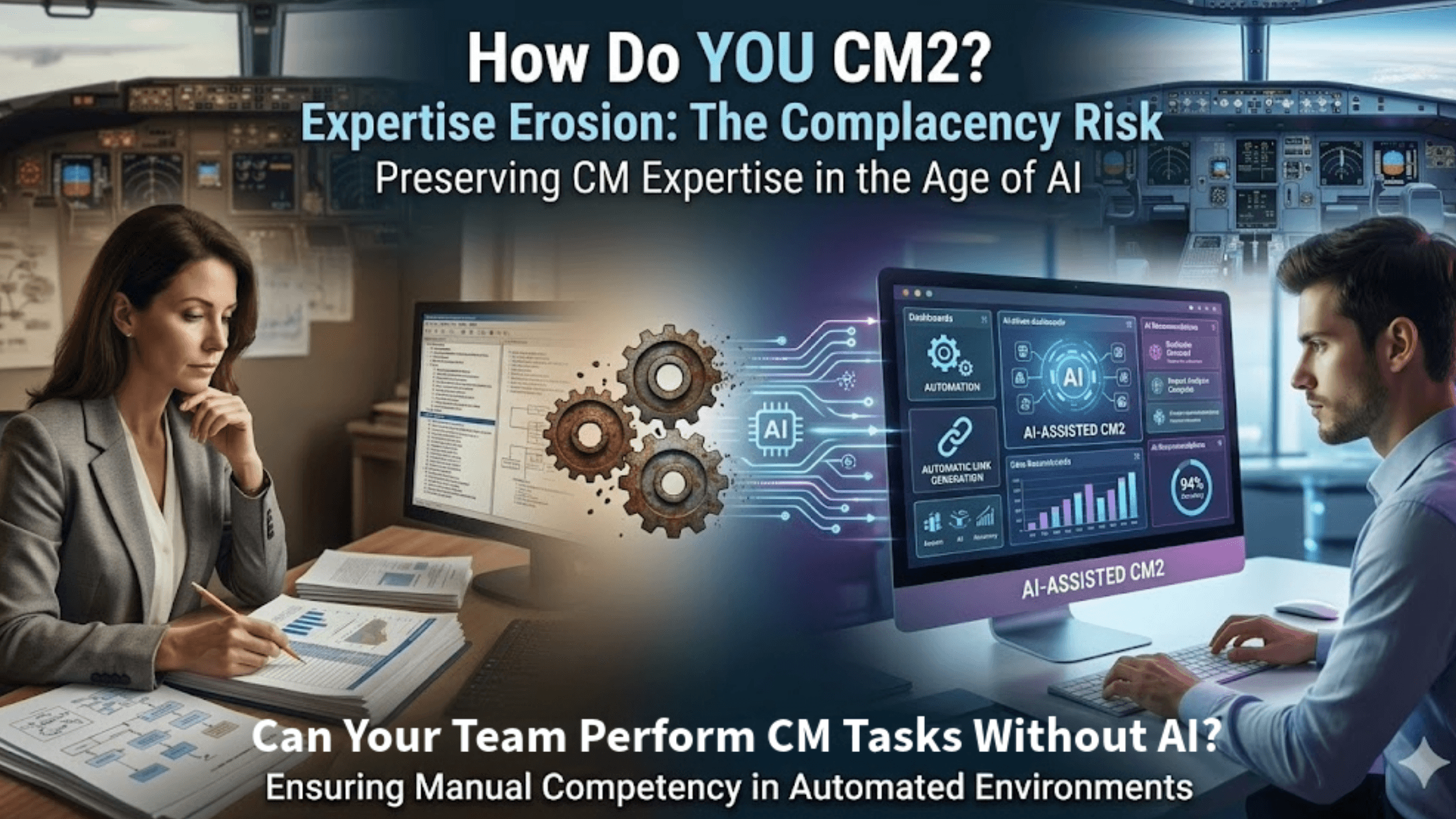

When AI handles duplicate detection, impact analysis, and traceability automatically, junior configuration managers never perform these tasks manually, so they never develop the pattern-recognition skills that manual work builds. The efficiency gains are real, but the cost surfaces years later, when organizations discover that their CM professionals can’t perform critical analysis without algorithmic assistance.

Aviation has already confronted this problem. Research on automation-induced skill fade shows that pilots who rely heavily on autopilot exhibit degraded manual flying skills, and recent studies show that those skills can fade after as little as two months without practice.

A 2025 survey revealed that even experienced flight instructors struggle with basic manual flying when automation is disabled. One senior instructor, who was also an examiner, “had a tough time doing it” when asked to fly manually.

The same dynamic threatens configuration management. When AI consistently provides correct answers, humans stop questioning those answers, stop developing the judgment needed to recognize when an AI recommendation is wrong, and gradually lose the expertise that makes human oversight valuable.

Consider requirements traceability. With AI generating trace links at 94% accuracy, reviews should be simple. However, someone who creates links manually must evaluate semantic relationships, understand the architecture, and recognize missed dependencies, whereas someone who only reviews AI suggestions falls back on pattern matching: “Is this reasonable?”
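To make the 94% figure concrete, here is a minimal back-of-the-envelope sketch. It assumes that "accuracy" means the share of AI-generated links that are correct, and the 5,000-link project size is a hypothetical number chosen for illustration, not a figure from any real program. Links the AI never proposed are not counted at all.

```python
def expected_incorrect_links(total_links: int, accuracy: float) -> int:
    """Estimate how many AI-generated trace links are wrong.

    Assumption: `accuracy` is the fraction of generated links that are
    correct. Missed links (ones the AI never proposed) are not included.
    """
    if total_links < 0:
        raise ValueError("total_links must be non-negative")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return round(total_links * (1.0 - accuracy))


# Hypothetical project size, for illustration only.
wrong = expected_incorrect_links(total_links=5000, accuracy=0.94)
print(f"Links a reviewer still has to catch: {wrong}")
```

Under these assumptions, a reviewer who only asks “Is this reasonable?” would still need to spot a few hundred incorrect links hidden among thousands of plausible ones.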

Experienced configuration managers develop intuition about which changes need scrutiny, which stakeholders to engage early, and where documentation gaps reveal issues. This intuition comes from making mistakes and learning from them, so an AI that prevents every error can also remove the opportunity to learn.

Several approaches have emerged:

Graduated automation: Junior managers perform tasks manually before gaining AI access, developing pattern recognition before algorithmic support.

Periodic manual practice: Require manual requirements tracing for at least one component per quarter, ensuring the ability to perform core functions when AI isn’t available.

Explainable AI systems: When an AI system flags a potential impact between an ECU firmware change and thermal management and explains why, the explanation teaches the reviewer a pattern they can apply independently.

Competency gates: Require demonstrating manual competency before using AI for critical functions.
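As a rough illustration, the competency gate and the quarterly manual practice described above could be combined into a single access check. This is only a sketch under stated assumptions: the `ManagerRecord` type, its field names, and the 92-day interval standing in for "one quarter" are all hypothetical and would need to be adapted to an organization's own training records.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumption: "one quarter" is approximated as 92 days.
PRACTICE_INTERVAL = timedelta(days=92)


@dataclass
class ManagerRecord:
    """Hypothetical training record for one configuration manager."""
    name: str
    passed_manual_assessment: bool      # competency gate
    last_manual_trace: Optional[date]   # most recent manual tracing exercise


def may_use_ai_for_critical_work(record: ManagerRecord, today: date) -> bool:
    """Allow AI assistance only if manual competency was demonstrated
    and manual practice took place within the last quarter."""
    if not record.passed_manual_assessment:
        return False
    if record.last_manual_trace is None:
        return False
    return today - record.last_manual_trace <= PRACTICE_INTERVAL


# Example with made-up data.
junior = ManagerRecord("A. Example", True, date(2026, 3, 15))
print(may_use_ai_for_critical_work(junior, today=date(2026, 4, 28)))
```

A check like this does not measure expertise by itself; it only makes lapses in manual practice visible before they become a problem.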

The goal isn’t to prevent AI adoption; it’s to ensure AI enhances, rather than replaces, human expertise.

If your AI system went offline tomorrow, how many configuration managers could perform critical analysis manually – and how would you know before it’s too late?

What’s your approach to preserving expertise while adopting automation?


Copyrights by the Institute for Process Excellence

This article was originally published on ipxhq.com & mdux.net.